Artificial intelligence regulators in the UK are “under-resourced” in comparison to developers of the technology, the Commons science committee chairman has warned.

The Science, Innovation and Technology Committee said in a report into the governance of AI that £10 million announced by the Government in February to help Ofcom and other regulators respond to the growth of the technology was “clearly insufficient”.

It added that the next government should announce further financial support “commensurate to the scale of the task”, as well as “consider the benefits of a one-off or recurring industry levy” to help regulators.

Outgoing committee chairman Greg Clark said he was “worried” that UK regulators were “under-resourced compared to the finance that major developers can command”.

The report, published on Tuesday, also expressed concern at suggestions that the new AI Safety Institute has been unable to access some developers’ models to carry out the pre-deployment safety testing that was intended to be a major focus of its work.

The committee has called on the next government to name any developers that refused access — in contravention of the agreement at the November 2023 summit at Bletchley Park — and report their justification for refusing.

It adds that the Government and regulators should safeguard the integrity of the election campaign by taking “stringent enforcement action” against online platforms hosting deepfake content which “seeks to exert a malign influence on the democratic process”.

Former business secretary Mr Clark said it was important to test the outputs of AI models for biases “to see if they have unacceptable consequences”, as biases “may not be detectable in the construction of models”.

Commenting on the report, Mr Clark said: “The Bletchley Park summit resulted in an agreement that developers would submit new models to the AI Safety Institute.

“We are calling for the next government to publicly name any AI developers who do not submit their models for pre-deployment safety testing.

“It is right to work through existing regulators, but the next government should stand ready to legislate quickly if it turns out that any of the many regulators lack the statutory powers to be effective.

“We are worried that UK regulators are under-resourced compared to the finance that major developers can command.”

In its report, the committee states that the “most far-reaching challenge” of AI may be the way it can operate as a “black box” – in that the basis of, and reasoning for, its output may be unknowable.

The MPs add that if a chain of reasoning cannot be viewed, there must be stronger testing of the outputs of AI models as a means to assess their power and acuity.

The committee states that the conclusions and recommendations of the report apply to whoever is in government after the General Election on July 4.

In its last report of the current Parliament on the topic, the committee writes: “It is important that the timing of the General Election does not stall necessary efforts by the Government, developers and deployers of AI to increase the level of public trust in a technology that has become a central part of our everyday lives.”

It adds that any new government should be ready to produce AI-specific legislation should the current approach “prove insufficient to address current and potential future harms associated with the technology”.

The Department for Science, Innovation and Technology said the UK was taking steps to regulate AI and was upskilling regulators as part of a wider £100 million funding package.

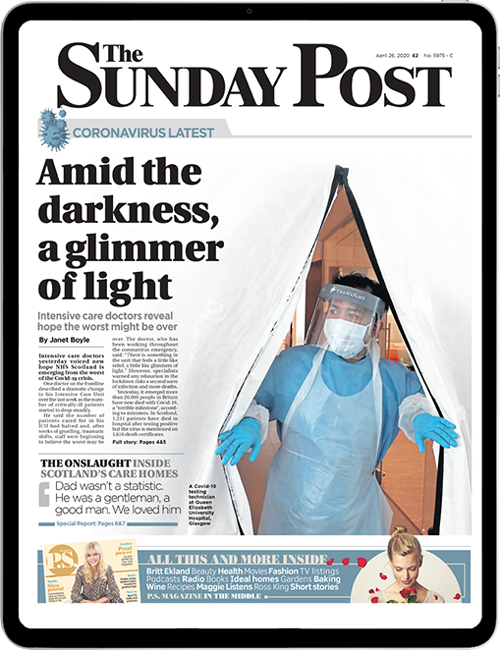
