Social media giants have pledged hundreds of thousands of pounds to the Samaritans charity in a bid to rid the internet of self-harm videos and other damaging material, Health Secretary Matt Hancock said.

Representatives from Facebook, Google, Snapchat and Instagram were summoned by the Government to meet with the charity to identify and tackle harmful content, including that promoting suicide.

The summit in Whitehall came three weeks after the Government announced plans to make tech giants and social networks more accountable for harmful material online.

The maiden summit in February resulted in Instagram agreeing to ban graphic images of self-harm from its platform.

Speaking after the behind-closed-doors meeting, Mr Hancock said: “I met the main social media companies today and they have agreed to fund the Samaritans to identify what is harmful and then to put in place the technology on their platforms to find harmful material and make sure it is either removed or others can’t see it.

“The amount of support is in the hundreds of thousands.

“The crucial thing is that we have an independent body, the Samaritans, being able to be the arbiter of what is damaging content that needs taking down so all tech companies can follow the new rules that have been set out.”

Social media companies and the Government have been under pressure to act following the death of 14-year-old Molly Russell in 2017.

The schoolgirl’s family found material relating to depression and suicide when they looked at her Instagram account following her death.

Mr Hancock went on: “I feel the tech companies are starting to get the message, they’re starting to take action.

In a tweet on April 29, 2019, Samaritans (@samaritans) said: “As part of this partnership, we hope to carry out research on online self harm and suicide content to inform best practice guidelines that can be adopted by social media companies to reduce the impact of harmful content.”

“But there’s much more to do … we also spoke about tackling eating disorders and some anti-vaccination messages which are so important to tackle to ensure they do not get prevalence online.”

In a statement, a spokesman for Facebook, which also owns Instagram, said: “The safety of people, especially young people, using our platforms is our top priority and we are continually investing in ways to ensure everyone on Facebook and Instagram has a positive experience.

“Most recently, as part of an ongoing review with experts, we have updated our policies around suicide, self-harm and eating disorder content so that more will be removed.

“We also continue to invest in our team of 30,000 people working in safety and security, as well as technology, to tackle harmful content.

“We support the new initiative from the Government and the Samaritans, and look forward to our ongoing work with industry to find more ways to keep people safe online.”

Samaritans chief executive Ruth Sutherland said: “We look forward to building a strategic partnership with government and the world’s leading technology companies that will help us all tackle the issue of dangerous online content relating to self harm and suicide together.

“An innovative research programme will be the foundation for building our shared knowledge on this complex issue.

“We need to know more about how certain content affects different people.

“We all have a role to play in suicide prevention and, by working together, we believe this hub of online excellence will drive meaningful change on an issue that needs urgent attention.”

The online harms white paper sets out a new statutory duty of care to make companies take more responsibility for the safety of their users and tackle harm caused by content or activity on their services.

Compliance with this duty of care will be overseen and enforced by an independent regulator.

Failure to fulfil this duty of care will result in enforcement action, such as a fine for the company or individual liability for senior management.

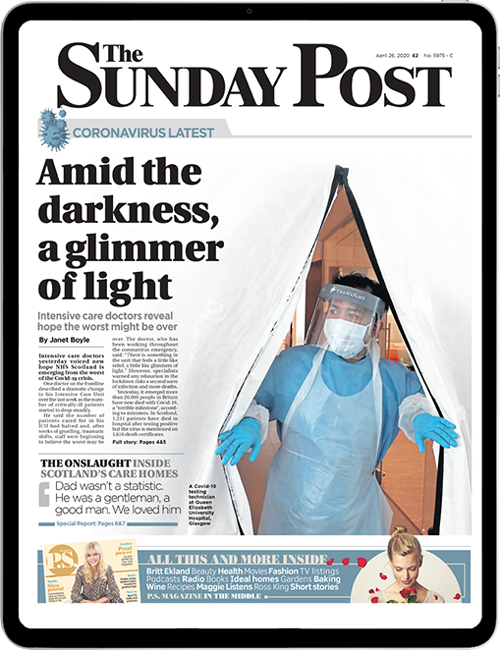
